Developer platform GitLab today announced a new AI-powered security feature that uses a large language model to explain potential vulnerabilities to developers, with plans to extend it in the future so that AI resolves those vulnerabilities automatically.

Earlier this month, the company announced a new experimental tool that explains code to developers (similar to the security feature announced today) and a new experimental feature that automatically summarizes issue comments. In this context, it's also worth noting that GitLab has already launched a code completion tool, now available to GitLab Ultimate and Premium users, and released its ML-based Suggested Reviewers feature last year.

Image credits: GitLab

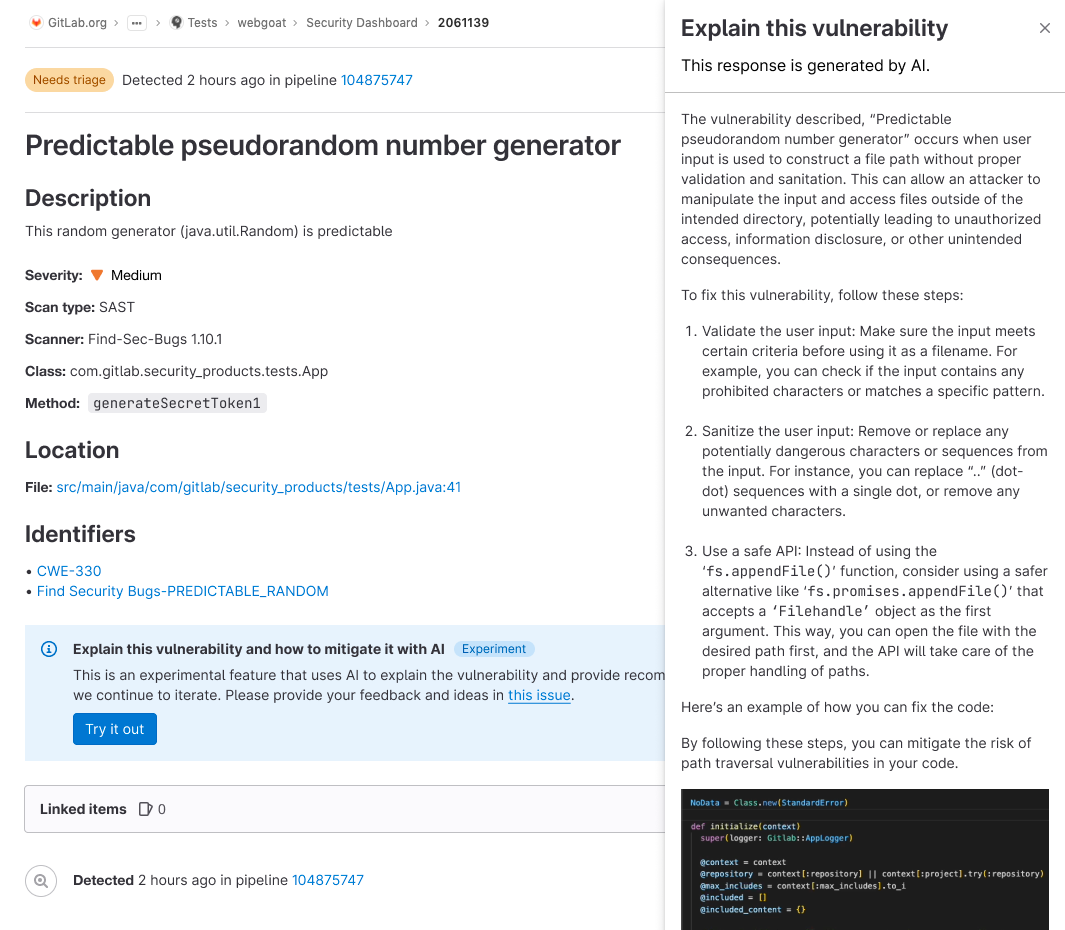

The new Explain This Vulnerability feature will try to help teams find the best way to fix a vulnerability within the context of their codebase. It is this context that makes the difference here, as the tool is able to combine basic information about the vulnerability with specific insights from the user's code. This should make it easier and faster to remediate these issues.

The company calls its overarching philosophy behind adding AI features "velocity with guardrails," that is, combining AI code and test generation with the backing of the company's full DevSecOps platform to ensure that whatever the AI generates can be deployed safely.

GitLab also stressed that all of its AI features are built with privacy in mind. "If we are touching your intellectual property, which is code, we are only going to send that to a model that is GitLab's or is inside the GitLab cloud architecture," David DeSanto, chief product officer at GitLab, told me. "The reason this is so important to us, and this goes back to enterprise DevSecOps, is that our customers are heavily regulated. Our customers are usually very security and compliance conscious, and we knew we could not build a code suggestions solution that required us to send it to a third-party AI." He also noted that GitLab will not use its customers' data to train its models.

DeSanto stressed that GitLab's overall goal for its AI initiative is to increase efficiency tenfold, and not just the efficiency of the individual developer but that of the overall development lifecycle. As he rightly notes, even if you could make a developer 100 times more productive, inefficiencies further downstream in reviewing that code and putting it into production could easily negate the gain.

"If development is 20% of the life cycle, even if we make that 50% more effective, you're not really going to feel it," DeSanto said. "Now, if we make the security teams, the operations teams, the compliance teams also more efficient, then as an organization, you're going to see it."

For example, the Explain This Code feature has turned out to be very useful not only for developers but also for QA and security teams, who now get a better understanding of what they have to test. That is surely also why GitLab expanded it to explain vulnerabilities as well. In the long term, the idea is to build features that help these teams automatically generate unit tests and security reviews, which would then be integrated into the overall GitLab platform.

According to a recent DevSecOps report from GitLab, 65% of developers are already using AI and machine learning in their testing efforts or plan to do so within the next three years. Already, 36% of teams use an AI/ML tool to check their code before code reviewers see it.

"Given the resource constraints DevSecOps teams face, automation and AI have become a strategic resource," GitLab's Dave Steer wrote in today's announcement. "Our DevSecOps platform helps teams close critical gaps as they automate implementing policies, applying compliance frameworks, running security tests using GitLab's automation capabilities, and making AI-powered recommendations, freeing up resources."